There’s a moment every developer eventually hits when they stop treating AI as a magic oracle and start asking: okay, but how does it actually work?

It’s the technology equivalent of learning Santa isn’t real. A little wonder leaves the room — that slightly thrilling sense that something out there is smarter than you and you can’t quite explain why. But something better moves in to replace it: understanding. And understanding, it turns out, is a surprisingly effective antidote to the kind of fear that has people picturing Skynet every time a chatbot gives a confident answer.

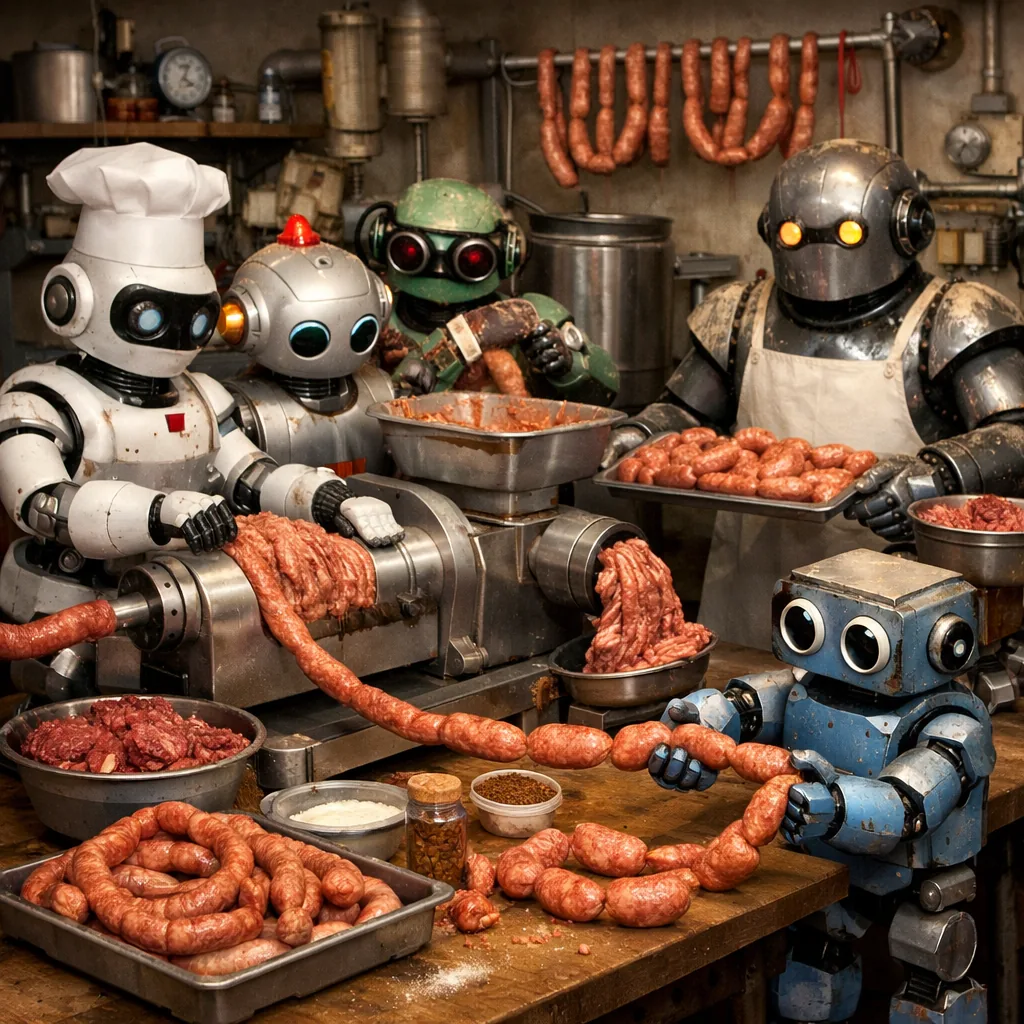

The Sausage Factory Floor

Let’s talk about what a large language model actually is, stripped of the marketing.

At its core, an LLM is a system trained to predict the next token in a sequence. A “token” is roughly a word fragment — “the”, “quick”, “brown”, “fox” might each be a token. The model reads everything that came before and asks: given all of this, what word fragment should come next?

That’s it. That’s the magic trick.

The model was trained on a staggering amount of text — hundreds of billions of words scraped from books, websites, code repositories, research papers, forum posts, and everything in between. During training, it saw a sentence, tried to predict the next word, checked whether it was right, and nudged its internal parameters — billions of floating-point numbers — in the direction that would make it slightly more right next time. Repeat this process trillions of times across petabytes of text, and you end up with a model that has encoded an enormous amount of statistical structure about how human language and knowledge is organized.

When you send a message to an LLM, it doesn’t “think” the way you do. It doesn’t pause and reflect. It doesn’t have opinions it’s concealing. It generates a response one token at a time, each token chosen by running your entire conversation through its billions of parameters and sampling from a probability distribution over its vocabulary.

It is, at its heart, an incredibly sophisticated autocomplete.

Why This Kills the Terminator Fantasy

Here’s where knowing how the sausage gets made becomes genuinely useful: it makes certain fears obvious nonsense.

The Terminator scenario — AI wakes up, decides humans are a threat, and exterminates us — requires the AI to want something. It requires the system to have goals that persist beyond a single conversation, to model its own existence, to feel threatened, to plan. LLMs don’t have any of that. There is no “awake” state between prompts. There is no continuous experience. The model doesn’t remember yesterday. It doesn’t have a survival instinct. It has weights — billions of numbers — and when you give it input, those weights produce output. Then it stops. There is no “it” persisting between conversations, scheming.

The fear of AI sentience misunderstands what sentience requires. Sentience isn’t just sophisticated pattern matching. It’s self-awareness, persistent subjective experience, drives, desires. Current LLMs have none of that by design — not because no one thought to add it, but because the architecture doesn’t give rise to it. You can’t become self-aware by predicting the next word.

This doesn’t mean AI has no risks worth taking seriously. Misinformation, bias baked into training data, overreliance on confident-sounding wrong answers — these are real concerns. But they’re engineering and social problems, not existential ones. Knowing how the model works helps you know which problems to actually worry about.

What It Actually Knows — and How

When an LLM answers a question about, say, the French Revolution, it isn’t retrieving a stored fact file. It learned the shape of how information about the French Revolution gets expressed in text — the associations between words like “Robespierre,” “guillotine,” “1789,” “Reign of Terror” — and it produces an answer that fits that learned shape.

This is why LLMs are uncannily good at some things (summarizing, explaining, rephrasing, connecting concepts across domains) and surprisingly bad at others (precise arithmetic, reliably citing sources, knowing what happened last week). Their knowledge is distributed, associative, and statistical. It’s not a database with rows and columns. It’s more like a vast web of learned correlations, weighted by how often ideas appeared together in training data.

Understanding this also explains hallucinations — when a model states something false with total confidence. It’s not lying. It doesn’t know what truth is in any philosophical sense. It’s producing text that fits the shape of a confident, knowledgeable answer, because that shape is what it learned from millions of examples of confident, knowledgeable writing. The plausibility of the output and its accuracy are related but not the same thing, and the model has no internal fact-checker comparing the two.

But Wait — Does Any of This Sound Familiar?

Here’s where the rabbit hole gets genuinely interesting.

Much of what we’ve just described — pattern recognition trained on massive experience, associative retrieval, statistical inference, no explicit fact database — also describes, at least loosely, how the human brain is thought to work.

Neuroscientists have long theorized that the brain is fundamentally a prediction machine. The predictive processing framework, associated with researchers like Karl Friston and Andy Clark, proposes that the brain is constantly generating predictions about what it expects to sense next — and that perception, thought, and action are all fundamentally about updating and acting on those predictions. You don’t passively receive reality; you actively predict it, and you notice when reality surprises you.

Memory, too, is not a tape recording. It’s reconstructive. Every time you recall something, you’re partly rebuilding it from fragments using learned patterns. Eyewitness testimony is unreliable for exactly this reason — confident, coherent, and wrong, just like an LLM hallucination.

This doesn’t mean human brains and LLMs are the same thing. They clearly aren’t — in architecture, substrate, the presence of embodiment, the continuity of experience. But the similarities suggest something interesting: maybe “intelligence” and “understanding” at some level always look like very sophisticated pattern matching over very large datasets of experience. Maybe the magic was always statistical.

What’s Left When the Magic Goes

Learning how LLMs work does remove something. There’s a romance to the idea of a mysterious intelligence out there — alien, powerful, possibly dangerous. That narrative is compelling. It sells movies. It gets people to read articles.

What you get instead, when you look inside the machine, is something arguably more interesting: a mirror. A system that reflects back the structure of human knowledge, compressed and reorganized by a training process we designed. When it sounds wise, it’s because wisdom was in the training data. When it sounds confused, it’s because the training data was confused on that topic, or the question didn’t fit any shape it had learned.

The fear goes. The wonder can stay, just redirected — toward the genuine marvel of what can emerge from predicting the next word billions of times, and what that might tell us about ourselves.

If intelligence really is sophisticated pattern-matching all the way down — in silicon and in neurons alike — what does that change about how you think of your own thoughts?